Kernel Coverage with Testwell CTC++

Code Coverage of Linux Kernel

The kernel manages I/O (input/output) requests from software, and translates them into data processing instructions for the central processing unit and other electronic components of a computer (cf. Wikipedia).

Proof of Concept

This capability is part of the CTC++ Host-Target add-on package. Kernelcoverage is a specialization of CTC++ Host-Target: it uses the same components and techniques, but the target environment is kernel-space code on the host itself. The Kernelcoverage component also describes and suggests a technique for transferring the collected execution counter data from kernel space to user space.
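The source does not spell out which transfer technique Kernelcoverage suggests, so the following is only a minimal sketch of one common shape such a transfer can take: a read-style handler that hands out the raw counter array in chunks. The names `counters`, `COUNTER_COUNT`, and `counters_read` are hypothetical, the counter values are sample data, and the plain byte loop stands in for what would be `copy_to_user()` in a real kernel module.

```c
#include <stddef.h>

/* Hypothetical sketch only; not the technique shipped with Kernelcoverage. */
#define COUNTER_COUNT 4u

/* Sample execution counters, as they might look after a test run. */
static unsigned long counters[COUNTER_COUNT] = { 3ul, 0ul, 12ul, 1ul };

/*
 * Copy up to `len` bytes of the counter array, starting at byte `offset`,
 * into `dst`. Returns the number of bytes copied; 0 means end of data.
 * This mirrors the contract of a typical read handler.
 */
size_t counters_read(unsigned char *dst, size_t len, size_t offset)
{
    const unsigned char *src = (const unsigned char *)counters;
    size_t total = sizeof counters;
    size_t i;

    if (offset >= total)
        return 0;
    if (len > total - offset)
        len = total - offset;       /* clamp to the remaining bytes */
    for (i = 0; i < len; i++)       /* in a kernel: copy_to_user() */
        dst[i] = src[offset + i];
    return len;
}
```

A user-space reader would call the handler repeatedly, advancing the offset by the returned byte count, until it returns 0, and would then interpret the bytes as an array of `unsigned long` counters.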

Measuring coverage in kernel-space code is generally a challenge for instrumentation-based coverage tools. In kernel-space code the instrumentation probes cannot use library functions or system calls, whereas in user-space code they can. The CTC++ instrumentation and its run-time support layer assume only basic C and use no system services, so the instrumented code runs smoothly in kernel space.
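To illustrate why probes written in basic C are unproblematic in kernel space, here is a minimal hand-written sketch of counter-based instrumentation. It is not CTC++'s actual run-time layer; `PROBE`, `probe_hits`, `clamp_to_zero`, and `probe_hit_count` are hypothetical names, and the sketch ignores concurrency issues that real kernel code would have to consider.

```c
/* Hypothetical sketch of counter-based probes; not CTC++'s real output. */
#define PROBE_COUNT 3u

/* Statically allocated counters: no malloc, no libc, no system calls. */
static unsigned long probe_hits[PROBE_COUNT];

/* A probe is a plain array increment, so it needs no system services. */
#define PROBE(id) (probe_hits[(id)]++)

/* Example function with probes placed at entry and on each branch. */
int clamp_to_zero(int value)
{
    PROBE(0);                   /* function entry */
    if (value < 0) {
        PROBE(1);               /* condition was true */
        return 0;
    }
    PROBE(2);                   /* condition was false */
    return value;
}

/* Read one counter; out-of-range ids report zero hits. */
unsigned long probe_hit_count(unsigned int id)
{
    return id < PROBE_COUNT ? probe_hits[id] : 0ul;
}
```

Because the probe compiles down to a memory increment, the same code can run in user space or kernel space; only the step of getting the counters out differs.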

As a proof of concept for Kernelcoverage we have instrumented a complete Linux kernel and have run a certain test suite on it. Here we describe the use case. You can also view the resultant CTC++ coverage report.

The starting point was a Linux 2.4.0 kernel, consisting of 227 C code files (158,000 source lines) plus the necessary header files. Compiling them originally took about 9 minutes (wall-clock time) and produced a 545 kB kernel.

When all 227 code files were instrumented (using CTC++'s widest coverage instrumentation mode, 'multicondition coverage') and the instrumented files were compiled, the build took about 16 minutes (+78%). The resultant instrumented kernel was 916 kB (+68%). [These sizes were taken from compressed images. Comparing the uncompressed images, the size increase was 44%.]
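The quoted percentages follow directly from the raw figures: (16 - 9) / 9 is roughly 77.8%, and (916 - 545) / 545 is roughly 68.1%. The small helper below, with the hypothetical name `percent_increase`, reproduces that arithmetic.

```c
/* Relative increase of `after` over `before`, in percent. */
double percent_increase(double before, double after)
{
    return (after - before) / before * 100.0;
}
```

Calling `percent_increase(9.0, 16.0)` gives the build-time overhead and `percent_increase(545.0, 916.0)` the size overhead of the compressed image.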

The publicly available Linux kernel test suite from Hewlett Packard Enterprise, version ltp-20001012, was executed with both the original non-instrumented kernel and the instrumented kernel. No human-noticeable slowdown was observed. Measured with time(1), about 16% more time was spent in the instrumented kernel than in the original kernel when executing this test suite.

The test suite itself did not achieve very high test coverage over these 227 C files. Booting and logging in alone yielded 21%, and running the test suite raised it by 4 percentage points to 25%. The coverage percentage, however, is not the point here (and CTC++ has no control over it). The point is to show that a complete kernel, even its most time-critical portions, can be instrumented with Testwell CTC++ and that the execution profile can be obtained.

This exercise also gives you an idea of the overhead CTC++ adds to the instrumentation/compilation time, to the size of the instrumented executable, and to the execution time of the instrumented code. Even though we instrumented so that the CTC++ overhead would be maximal, it is still quite reasonable. In practice you may not be interested in all 227 files: measuring only a couple of files, or using a lesser instrumentation mode than 'multicondition coverage', may suffice, in which case these overheads would be lower.
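To give a feel for why multicondition coverage is the most demanding mode, the sketch below tracks the evaluation combinations of a single decision `a && b`. It assumes the usual definition for short-circuit C operators, under which this decision has three combinations: `a` false (so `b` is skipped), `a` true with `b` false, and both true. All names here are hypothetical, and this is hand-written bookkeeping rather than what CTC++ generates.

```c
/* Hypothetical bookkeeping for the decision (a && b). */
enum { COMBO_F_SKIP, COMBO_T_F, COMBO_T_T, COMBO_COUNT };

static unsigned long combo_hits[COMBO_COUNT];

/*
 * Return the value of (a && b) and record which combination occurred.
 * When `a` is false, `b` is not inspected, mirroring short-circuiting.
 */
int and_with_probe(int a, int b)
{
    if (!a) {
        combo_hits[COMBO_F_SKIP]++;
        return 0;
    }
    if (!b) {
        combo_hits[COMBO_T_F]++;
        return 0;
    }
    combo_hits[COMBO_T_T]++;
    return 1;
}

/* Percentage of the three combinations seen at least once. */
unsigned int combos_covered_percent(void)
{
    unsigned int seen = 0, i;

    for (i = 0; i < COMBO_COUNT; i++)
        if (combo_hits[i] > 0)
            seen++;
    return seen * 100u / COMBO_COUNT;
}
```

A lesser mode would only need to know whether the decision as a whole was ever true and ever false, which takes fewer counters and less inserted code per decision.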

See the Kernelcoverage report to view the CTC++ coverage report of this exercise. Two browser windows are opened, with two frames in each. The left frame in the first window shows the coverage summaries at file level. Clicking a file name focuses the right frame on that file and gives you a zoom-in view of its functions. Clicking the links in the right frame focuses the second window on the detailed execution profile listing of the pertinent file or function. Finally, in the right frame of the second window you can view the actual source file. The top frame shows all 227 files. The other frames contain about 8 files each, so to see files that are not close to your current focus point you need to start navigating from the top frame again.

Benefits

- Support of all compilers/cross-compilers

- Support of all embedded targets and microcontrollers

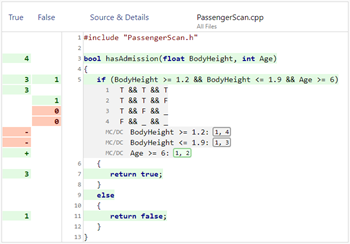

- Analyses for all coverage levels up to MC/DC and MCC Coverage

- Compliant for safety critical development

- Tool Qualification Kit available

- Certified by TÜV SÜD

- Simplifies analysis of Penetration tests

- Support for C, C++, Java, and C#

- Performs Kernel Coverage

- Integrations in many tool chains and testing environments

- Broad platform support

- Works with all Unit Testing Tools

- Integrations in many IDEs

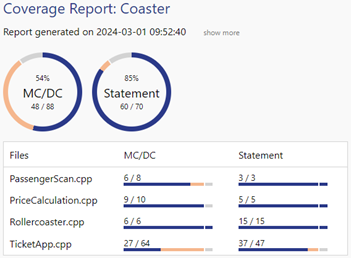

- Clear and meaningful reports

- Very easy to use

- Thousands of licenses successfully in use for safety critical development

- Proven customer success

- Live presentations, training, and online presentations

- Free evaluation licenses

Frequently Asked Questions